code-review-graph — Static Analysis via Dependency Graph

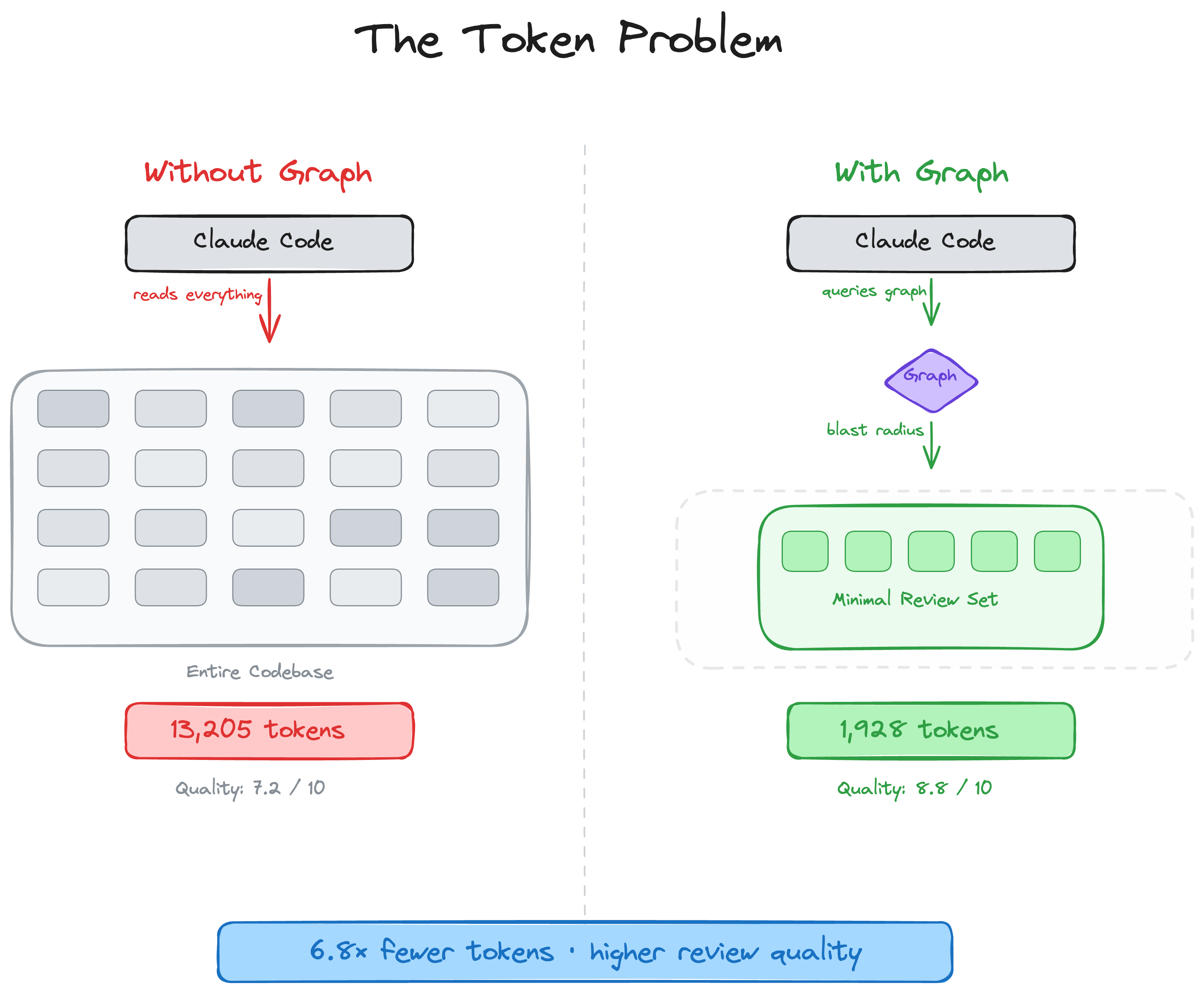

code-review-graph builds a directed graph of your codebase and uses it to drive code review. Functions, calls, imports, and tests are all connected in it. When a file changes, the tool traces that file's dependents before running any review logic. Blast radius first.
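To make "blast radius first" concrete, here is a minimal sketch of tracing dependents over a reverse-edge index. It is not the tool's implementation: the edge list, the function names (`build_reverse_index`, `blast_radius`), and every entity name other than `src/auth.py::AuthService.login` are made up for illustration.

```python
from collections import defaultdict, deque

def build_reverse_index(edges):
    """Map each node to the set of nodes that depend on it directly."""
    dependents = defaultdict(set)
    for source, target in edges:
        # An edge (source, target) means "source depends on target".
        dependents[target].add(source)
    return dependents

def blast_radius(changed, dependents):
    """Return every node that transitively depends on a changed node (BFS)."""
    changed = set(changed)
    impacted = set()
    queue = deque(changed)
    while queue:
        node = queue.popleft()
        for dependent in dependents.get(node, ()):
            if dependent not in impacted and dependent not in changed:
                impacted.add(dependent)
                queue.append(dependent)
    return impacted

# Hypothetical edges: callers and tests point at what they use.
EDGES = [
    ("src/api.py::login_view", "src/auth.py::AuthService.login"),
    ("tests/test_auth.py::test_login", "src/auth.py::AuthService.login"),
    ("src/app.py::main", "src/api.py::login_view"),
    ("src/billing.py::charge", "src/db.py::connect"),
]

impacted = blast_radius(
    {"src/auth.py::AuthService.login"}, build_reverse_index(EDGES)
)
print(sorted(impacted))
```

Only the nodes in `impacted` would need to go through review; `src/billing.py::charge` never enters the queue because nothing connects it to the change.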

The storage choice is the part I’d steal first: one SQLite file in WAL mode, holding the graph, metadata, and vector embeddings as binary blobs. No Chroma, no Qdrant, no separate process to keep alive. Incremental updates compare SHA-256 hashes of file contents, so unchanged files get skipped entirely. On a 2,900-file repo, a full re-traversal runs in under 2 seconds.
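A rough sketch of that storage pattern, using only Python's standard library. The schema, table names, and helper functions here are assumptions for illustration, not the project's actual layout; the point is that WAL mode, hash-based skipping, and blob-encoded vectors all fit in one SQLite file.

```python
import hashlib
import os
import sqlite3
import struct
import tempfile

def open_store(path):
    """Open (or create) the single-file store and switch it to WAL mode."""
    conn = sqlite3.connect(path)
    conn.execute("PRAGMA journal_mode=WAL")
    conn.execute(
        "CREATE TABLE IF NOT EXISTS files "
        "(path TEXT PRIMARY KEY, sha256 TEXT NOT NULL)"
    )
    conn.execute(
        "CREATE TABLE IF NOT EXISTS embeddings "
        "(name TEXT PRIMARY KEY, vector BLOB NOT NULL)"
    )
    return conn

def needs_reindex(conn, path, content):
    """Return False if the stored hash matches; otherwise record the new hash."""
    digest = hashlib.sha256(content).hexdigest()
    row = conn.execute(
        "SELECT sha256 FROM files WHERE path = ?", (path,)
    ).fetchone()
    if row is not None and row[0] == digest:
        return False  # unchanged file: skip parsing and graph updates
    conn.execute(
        "INSERT OR REPLACE INTO files (path, sha256) VALUES (?, ?)",
        (path, digest),
    )
    conn.commit()
    return True

def save_embedding(conn, name, vector):
    """Store a vector as a little-endian float32 blob."""
    blob = struct.pack(f"<{len(vector)}f", *vector)
    conn.execute(
        "INSERT OR REPLACE INTO embeddings (name, vector) VALUES (?, ?)",
        (name, blob),
    )
    conn.commit()

def load_embedding(conn, name):
    """Decode a stored float32 blob back into a list of floats."""
    (blob,) = conn.execute(
        "SELECT vector FROM embeddings WHERE name = ?", (name,)
    ).fetchone()
    return list(struct.unpack(f"<{len(blob) // 4}f", blob))

with tempfile.TemporaryDirectory() as tmp:
    conn = open_store(os.path.join(tmp, "graph.db"))
    journal_mode = conn.execute("PRAGMA journal_mode").fetchone()[0]
    first = needs_reindex(conn, "src/auth.py", b"def login(): ...")
    second = needs_reindex(conn, "src/auth.py", b"def login(): ...")
    third = needs_reindex(conn, "src/auth.py", b"def login(): return True")
    save_embedding(conn, "src/auth.py::AuthService.login", [0.5, -1.0, 0.25])
    vector = load_embedding(conn, "src/auth.py::AuthService.login")
    conn.close()

print(journal_mode, first, second, third, vector)
```

The second call to `needs_reindex` is the cheap path: one hash and one primary-key lookup, with no parsing at all.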

Entity names are qualified strings, not UUIDs: src/auth.py::AuthService.login. Readable in logs, no collisions, no lookup table needed.
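One way such names could be derived for Python sources, sketched with the standard `ast` module. This is an assumption about how the `path::Dotted.name` format might be produced, not the tool's own parser; `qualified_names` and the sample source are hypothetical.

```python
import ast

def qualified_names(path, source):
    """List 'path::Dotted.name' for every class and function in the source."""
    names = []

    def visit(node, prefix):
        for child in ast.iter_child_nodes(node):
            if isinstance(
                child, (ast.ClassDef, ast.FunctionDef, ast.AsyncFunctionDef)
            ):
                dotted = f"{prefix}.{child.name}" if prefix else child.name
                names.append(f"{path}::{dotted}")
                visit(child, dotted)  # nested definitions extend the prefix
            else:
                visit(child, prefix)  # look inside if/try/with blocks too

    visit(ast.parse(source), "")
    return names

# Hypothetical file contents for illustration.
SOURCE = '''
class AuthService:
    def login(self, user):
        ...

    def logout(self, user):
        ...

def hash_password(password):
    ...
'''

entities = qualified_names("src/auth.py", SOURCE)
for entity in entities:
    print(entity)
```

Because the name encodes both file and nesting, two `login` methods in different classes or files stay distinct without any ID table.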

Flat key-value memory loses relationships. This project makes the case for modeling edges explicitly: once you have goal → project → service → activity, you can ask multi-hop questions, such as which activities ultimately serve a given goal, that a flat structure can’t answer.
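A small sketch of a multi-hop question over an edge table, using a recursive common table expression in SQLite. The `edges` table, the `kind:name` node labels, and the `reachable` helper are all invented for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE edges (src TEXT NOT NULL, dst TEXT NOT NULL)")
conn.executemany(
    "INSERT INTO edges (src, dst) VALUES (?, ?)",
    [
        # Hypothetical goal -> project -> service -> activity chain.
        ("goal:ship-v2", "project:auth-rewrite"),
        ("project:auth-rewrite", "service:auth-api"),
        ("service:auth-api", "activity:rotate-keys"),
        ("service:auth-api", "activity:add-sso"),
        ("goal:cut-costs", "project:db-migration"),
    ],
)

def reachable(conn, start, kind):
    """Return nodes of the given kind reachable from `start` in any number of hops."""
    rows = conn.execute(
        """
        WITH RECURSIVE walk(node) AS (
            SELECT ?
            UNION  -- UNION (not UNION ALL) deduplicates, so cycles terminate
            SELECT edges.dst FROM edges JOIN walk ON edges.src = walk.node
        )
        SELECT node FROM walk WHERE node LIKE ? ORDER BY node
        """,
        (start, kind + ":%"),
    ).fetchall()
    return [row[0] for row in rows]

activities = reachable(conn, "goal:ship-v2", "activity")
print(activities)
```

With a flat key-value store, answering the same question means knowing in advance which keys to join by hand; here the three hops fall out of the traversal.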

Two things I hadn’t seen elsewhere: an eval runner (code-review-graph eval --all) that measures accuracy against real repos, and cron scheduling baked in from the start. Most personal tools ship with no way to know whether they work. Even rough precision/recall numbers change how you think about the project. And scheduling built into the core means recurring analysis is a feature, not a bash script taped to a crontab.
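For a sense of what "rough precision/recall numbers" can look like, here is a sketch that scores a predicted set of impacted files against a known-correct set. It makes no claim about what `code-review-graph eval --all` actually measures; the function and the file names are hypothetical.

```python
def precision_recall(predicted, actual):
    """Score a predicted set against ground truth.

    precision = share of predictions that were right
    recall    = share of the truth that was found
    """
    predicted, actual = set(predicted), set(actual)
    true_positives = len(predicted & actual)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(actual) if actual else 0.0
    return precision, recall

# Hypothetical case: the tool flags four files, three were truly affected.
flagged = {"src/api.py", "src/app.py", "src/billing.py", "tests/test_auth.py"}
truly_affected = {"src/api.py", "src/app.py", "src/session.py"}

precision, recall = precision_recall(flagged, truly_affected)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Two of four flagged files were right and two of three affected files were found, so even this toy case separates "flags too much" from "misses things".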